If you analyse both the diagrams you will find that the mapping of nodes from a graph structure (non-euclidean or irregular domain) to an embedding space (figure B) is done in such a manner that the distances between nodes in the embedding space mirrors closeness in the original graph (preserving the structure of the node’s neighbourhood). In the below figure A) represents the Zachary Karate Club social network and B) illustrates the 2D visualisation of node embeddings created from the Karate graph using a DeepWalk method. The colouring in the graph represents different communities. In this graph, the nodes represent the persons and there exists an edge between the two persons if they are friends. Let’s understand this more intuitively with an interesting example from the graph structure of the Zachary Karate Club social network. Mapping Process in Graph Representation Learning (src: stanford-cs224w) Below diagram depicts the mapping process, encoder enc maps node u and v to low-dimensional vector zu and zv :

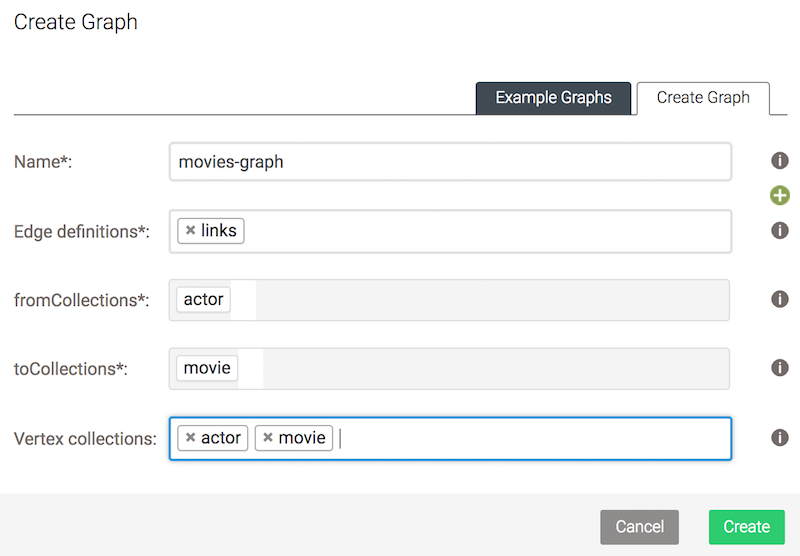

Therefore by doing this, we can preserve the geometric relationships of the original network inside the embedding space by learning a mapping function. The aim is to optimize this mapping so that nodes which are nearby in the original network should also remain close to each other in the embedding space (vector space), while shoving unconnected nodes apart. The key idea behind the graph representation learning is to learn a mapping function that embeds nodes, or entire (sub)graphs (from non-euclidean), as points in low-dimensional vector space (to embedding space). To tackle the above problem, representation learning approaches have been adopted to encode the structural information about the graphs into the euclidean space (vector/embedding space). However, with these methods we can not perform an end-to-end learning i.e features cannot be learned with the help of loss function during the training process. One way to extract structural information from the graph is to compute its graph statistics using node degrees, clustering coefficients, kernel functions or hand-engineered features to estimate local neighbourhood structures. information about the node’s global position in the graph or its local neighbourhood structure) into a machine learning model. The next question comes into mind is finding a way to integrate the information about graph structure (for e.g. Once the graph is created after incorporating meaningful relationships (edges) between all the entities (nodes) of the graph. This workshop digs deeper into the Importance of Graph Data Structures, Applications of Graph ML, Motivation behind Graph Representation Learning, How to use Graph ML in Production with Nvidia Triton Inference Server and ArangoDB using a real world application. I have conducted a Workshop on the topic “ Machine Learning on Graphs with PyTorch Geometric, NVIDIA Triton, and ArangoDB: Thinking Beyond Euclidean Space ”. 
Generating Graph Embeddings Visualisations and Observations.Open-Graph-Benchmark’s Amazon Product Recommendation Dataset.Getting Hands-on Experience with GraphSage and PyTorch Geometric Library.So in brief here is the outline of the blog: The goal is to predict the category of a product in a multi-class classification setup, where the 47 top-level categories are used for target labels making it a Node Classification Task. Nodes represent products sold in Amazon, and edges between two products indicate that the products are purchased together. We use ogbn-products dataset which is an undirected and unweighted graph, representing an Amazon product co-purchasing network to predict shopping preferences. For a practical application we are going to use the popular PyTorch Geometric library and Open-Graph-Benchmark dataset. This blogpost provides a comprehensive study on theoretical and practical understanding of GraphSage which is an inductive graph representation learning algorithm. A Comprehensive Case-Study of GraphSage with Hands-on-Experience using PyTorchGeometric Library and Open-Graph-Benchmark’s Amazon Product Recommendation Dataset Outline
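This minimal sketch makes the hand-engineered approach described earlier concrete. It computes two of the graph statistics named in the post, node degree and the local clustering coefficient, as a fixed feature vector per node. It runs in plain Python on a hypothetical four-node friendship graph. Nothing in it has parameters that a loss function could update, which is the end-to-end limitation mentioned above.

```python
from itertools import combinations

# Hypothetical toy friendship graph, stored as an undirected adjacency list.
graph = {
    "a": {"b", "c", "d"},
    "b": {"a", "c"},
    "c": {"a", "b"},
    "d": {"a"},
}

def degree(g, node):
    """Number of neighbours of `node`."""
    return len(g[node])

def clustering_coefficient(g, node):
    """Fraction of pairs of neighbours of `node` that are themselves connected."""
    neighbours = g[node]
    k = len(neighbours)
    if k < 2:
        return 0.0  # undefined for degree < 2; 0 by convention
    links = sum(1 for u, v in combinations(neighbours, 2) if v in g[u])
    return 2.0 * links / (k * (k - 1))

def hand_engineered_features(g):
    """Fixed [degree, clustering] vector per node; nothing here is learned."""
    return {n: [float(degree(g, n)), clustering_coefficient(g, n)] for n in g}

features = hand_engineered_features(graph)
for node, vec in sorted(features.items()):
    print(node, vec)
```

NetworkX's `nx.clustering` computes the same coefficient for real graphs. The point here is only that these features are computed once and then frozen before any model sees them.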
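The encoder from the mapping figure can be sketched as a "shallow" encoder, where ENC(v) is simply a row lookup in a trainable embedding table. It is paired here with a dot-product decoder $z_u \cdot z_v$. This is an illustrative assumption, not GraphSage and not the method used later in the post. The toy graph (two triangles joined by one bridge edge), the embedding size, the learning rate, and the logistic loss over all node pairs are all hypothetical choices. Training raises the score of connected pairs and lowers it for unconnected ones, which matches the objective described above: keep neighbours close and push unconnected nodes apart.

```python
import math
import random

# Hypothetical toy graph: two triangles joined by the bridge edge (2, 3).
NUM_NODES = 6
EDGES = {(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)}  # stored with u < v

def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def encode(z, node):
    """Shallow encoder ENC(v): a plain lookup into the embedding table."""
    return z[node]

def score(z, u, v):
    """Decoder: dot product z_u . z_v used as a similarity score."""
    return sum(a * b for a, b in zip(encode(z, u), encode(z, v)))

def train(dim=8, epochs=500, lr=0.1, seed=0):
    """SGD on a logistic loss: label 1 for edges, 0 for every other node pair."""
    rng = random.Random(seed)
    z = [[rng.gauss(0.0, 0.1) for _ in range(dim)] for _ in range(NUM_NODES)]
    pairs = [(u, v) for u in range(NUM_NODES) for v in range(u + 1, NUM_NODES)]
    for _ in range(epochs):
        for u, v in pairs:
            label = 1.0 if (u, v) in EDGES else 0.0
            # d(loss)/d(score) = sigmoid(score) - label
            err = sigmoid(score(z, u, v)) - label
            zu, zv = z[u][:], z[v][:]  # use pre-update values for both gradients
            for k in range(dim):
                z[u][k] -= lr * err * zv[k]
                z[v][k] -= lr * err * zu[k]
    return z

z = train()
print("connected (0, 1):", round(score(z, 0, 1), 2))
print("unconnected (0, 5):", round(score(z, 0, 5), 2))
```

The embedding table has one row per training node, so a shallow encoder like this cannot embed nodes it never saw during training. GraphSage learns functions that aggregate features from a node's neighbourhood instead, which is what makes it inductive.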
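Panel B of the Karate Club figure was produced with DeepWalk. DeepWalk treats short random walks over the graph as "sentences" and trains a skip-gram model on them, so nodes that often co-occur on walks end up close in the embedding space. The sketch below covers the first two stages: uniform truncated random walks, then the (center, context) pairs a skip-gram model would consume. It assumes a hypothetical six-node graph in place of the real 34-node Karate Club data. The walk count, walk length, and window size are illustrative values, not the settings behind the figure.

```python
import random

# Hypothetical stand-in for the Karate Club graph: a small undirected
# friendship graph stored as an adjacency list (node -> set of friends).
toy_graph = {
    0: {1, 2},
    1: {0, 2},
    2: {0, 1, 3},
    3: {2, 4, 5},
    4: {3, 5},
    5: {3, 4},
}

def random_walk(graph, start, length, rng):
    """Truncated walk that moves to a uniformly chosen neighbour at each step."""
    walk = [start]
    while len(walk) < length:
        neighbours = sorted(graph[walk[-1]])  # sorted so a fixed seed is reproducible
        if not neighbours:
            break  # dead end: stop this walk early
        walk.append(rng.choice(neighbours))
    return walk

def generate_walks(graph, walks_per_node, length, seed=0):
    """Start `walks_per_node` walks from every node, shuffling start order each pass."""
    rng = random.Random(seed)
    nodes = list(graph)
    walks = []
    for _ in range(walks_per_node):
        rng.shuffle(nodes)
        for node in nodes:
            walks.append(random_walk(graph, node, length, rng))
    return walks

def skipgram_pairs(walks, window):
    """(center, context) pairs for nodes at most `window` steps apart in a walk."""
    pairs = []
    for walk in walks:
        for i, center in enumerate(walk):
            lo, hi = max(0, i - window), min(len(walk), i + window + 1)
            pairs.extend((center, walk[j]) for j in range(lo, hi) if j != i)
    return pairs

walks = generate_walks(toy_graph, walks_per_node=10, length=8)
pairs = skipgram_pairs(walks, window=2)
print(walks[0])
print(pairs[:4])
```

The skip-gram training step itself is omitted. One common option is to pass the walks, converted to lists of string tokens, to gensim's `Word2Vec` with `sg=1`.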
